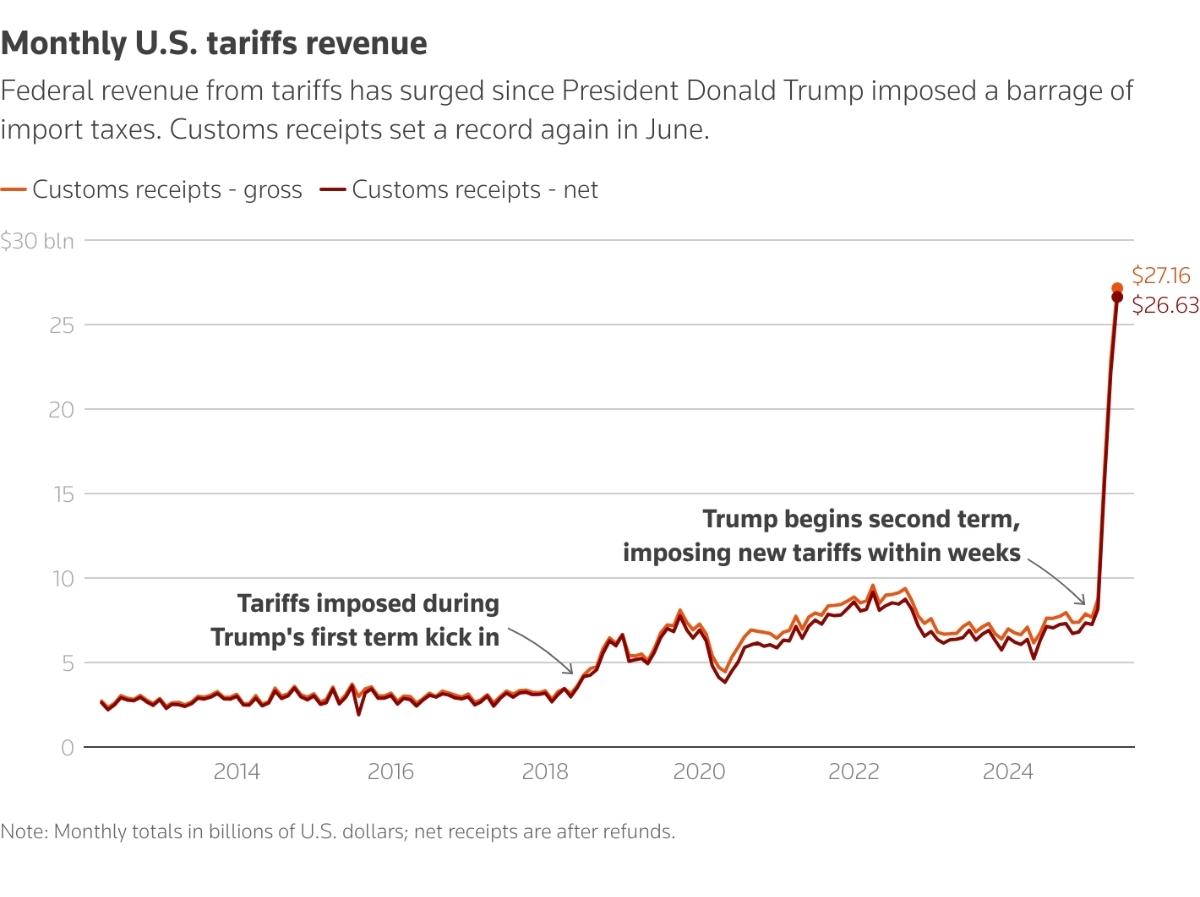

So one of the things I was working on as part of DeviceAtlas (but which ultimately didn’t get used) was a cache-conscious hash table in Java. It’s not unique in design – in fact it comes right out of a research paper written for the SPIRE 2005 conference by Nikolas Askitis and Justin Zobel – but the implementation was interesting to me as I’d not done optimisation work in Java in a while, and some things had changed quite a bit since I last wrote Java code. And it was a bit of an ego boost that I got it to outperform java.util.HashMap:

SUN HashMap fill: 57797 us SUN HashMap query: 165701 us, 0 errors CCHashTable fill (fair): 23205 us CCHashTable query (fair): 35513 us, 0 errors CCHashTable fill: 41723 us CCHashTable query: 43055 us, 0 errors

Of course, there are the minor criticisms that it’s nowhere near as general-purpose as the HashMap class and that HashMap is arguably exhibiting an intelligent design choice rather than cheating per se, but I like my ego so I’m going to ignore those arguments!

Looking at the more interesting bits, there’s the hash function. This came from more work by Zobel, this time with Ramakrishna for a database conference in Melbourne in 1997, and it’s a fairly simple design: for each character in the input string, left shift the interim hashcode, add the character, then right shift the interim hashcode and add that to the mix, then xor the whole lot with the interim hashcode and that’s your interim hashcode for the next iteration. Which, as a description intended to convey simplicity, probably fails. Oh well, the code is clearer:

/**

* getHashCode() differs from the standard Java hashcode algorithm.

* It's an shift-add-xor class algorithm, as tested in "Performance

* in Practise of String Hashing Functions", Jobel & Ramakrishna, 1997;

* and is implemented as efficiently as possible.

*/

public static int getHashCode(char inputString[])

{

int result = CCHashTable.seed;

int length = inputString.length;

for (int i = 0; i < length; i++) {

result ^= ( (result << 5) + inputString[i] + (result >>> 2) );

}

return ((result&0x7fffffff)&0x1ff);

}

There were initial performance problems with the code, because I started off just using a modulo to match the hashcode to the size of the hash table in the last line, and modulo’s performance took a significant kick in the pants when Java went from JDK 1.2 to 1.4 – I said it had been a while since I wrote Java code, right? So we go from modulo to a simple masking with 0x1FF which requires that the tablesize for the hash function stay fixed at 512, but that was the optimal level found by Asktis & Sinha anyway, so no real harm there (As far as I can tell by the way, that’s why hash table sizes for Java are now all 2n-1 in size).

Anyway, with the hashcode done, the rest of the code was reasonably simple. Thing about the CCHashTable is that it isn’t so much conscious of the cache as it is dependant upon it. It’s an interesting lesson – the data structures learnt in college are not obselete, but the almost obscene speed of even today’s entry-level desktop processor led to the necessity of caching main memory and the strategies used to pre-fill those caches have a heavy impact on performance which simply didn’t exist ten or twenty years ago. So, for example, linked lists incur a performance hit when you search along them because the cache has no idea where the current item in the list will point to in memory for the next item. However, you can get significant cache-induced performance gains by storing all the data in the structure in a simple contiguous array in memory. The cache sees you walk along the array as you search it, and prefetches the entire thing for you – so your memory access times improve enormously. It’s cheating of a sort I suppose, and eventually this is going to bite us in the ass when we wind up needing gigabyte caches, but for now it’s faster. And if all you’re storing is a string (and that’s what this application called for), then the coding is even easier – you just use a 2-D char array (we don’t use String objects for obvious performance reasons):

public class CCHashTable {

/** Number of slots in CCHashTable. 512 from Asktis&Sinha 2007. */

public static int tableSize = 512;

/** The CCHashTable's contents are stored in a 2D char array */

private char contents[][];

/** Basic constructor. */

public CCHashTable()

{

this.contents = new char[CCHashTable.tableSize][0];

}

}

About the only interesting thing left is the storage of strings in the char array – can’t just drop them in obviously (you’d have no way of seperating them). An EOL character would work, but length-encoded strings are a better idea as that way, when searching for a specific string later, you can call off the search early if you know that the string you’re looking at isn’t a match – by jumping ahead to the next string. Also, you can encode multiple data items this way, by putting them in the character stream between the end of one string and the start of the next. In the specific application, every string had an ID number tagged to it, so the Integer class got encoded into chars, stored at the end of the string and the length of the string was also encoded and stored at the start:

public boolean addString(char inputString[], int id) {

//Sanity check

if ((inputString==null)||(inputString.length==0)) {

return false;

}

int i = CCHashTable.getHashCode(inputString);

int j = 0;

int k = 0;

int length = 0;

int slotlength = this.contents[i].length;

int inputlength = inputString.length;

boolean match = false;

// First of all, is this string in the hash table allready?

while (j < slotlength) {

// if there's nothing left in the slot, jump out

if (this.contents[i][j] == '') {

break;

}

match = true;

// We store the strings as length-encoded, null-terminated

// strings in the char array hence first we swipe the

// length and reconvert it to an int

length = (int) (this.contents[i][j++] | (this.contents[i][j++] < < 16));

// If the string length doesn't match, the strings don't match, so jump out early.

if (inputlength!=length) {

match = false;

} else {

// Now check the array for a character match. Because this

// shows a stride pattern in memory accesses, it's

// cache-friendly.

for (k = j; this.contents[i][k] != ''; k++) {

if (this.contents[i][k] != inputString[k-j]) {

match = false;

break;

}

}

}

// If we found the string, we don't bother to add it, we

// just return back.

if (match) return false;

j += length +3;

}

// Do we need to grow this slot in the array? If so, do so

// now...

while ((slotlength - inputlength) < (j+5)) {

//char tmp[] = new char[slotlength + CCHashTable.slotPageSize];

//System.arraycopy(this.contents[i], 0, tmp, 0, slotlength);

//this.contents[i] = tmp

this.contents[i] = Arrays.copyOf(this.contents[i], slotlength + CCHashTable.slotPageSize);

slotlength = this.contents[i].length;

}

// Add String to end of contents array.

// First encode the length of the string (this allows us to

// skip forward rapidly in the array when string matching if we

// get an early negative)

this.contents[i][j++] = (char)(inputlength & 0xffff);

this.contents[i][j++] = (char)((inputlength > > 16) & 0xffff);

// Now copy over the inputString characters

//System.arraycopy(inputString, 0, this.contents[i], j, inputlength);

//j += inputlength;

for (k = 0; k < inputlength; k++) {

this.contents[i][j++] = inputString[k];

}

// null-terminate

this.contents[i][j++] = '';

// Now encode the id int after the null termination.

this.contents[i][j++] = (char)(id & 0xffff);

this.contents[i][j] = (char)((id > > 16) & 0xffff);

return true;

}

And so searching looked like this:

/**

* getId() is the retrieval method. It takes the inputString

* to check for and if found, it returns the associated id.

* If not found, it returns -1. This method is used on the local

* side of DeviceAtlas and has to run fast.

*/

public int getId(char inputString[]) {

int i = CCHashTable.getHashCode(inputString);

int j = 0;

int k = 0;

int length = 0;

int inputlength = inputString.length;

int slotlength = this.contents[i].length;

boolean match = false;

while (j < slotlength) {

// if there's nothing left in the slot, jump out and return -1

if (this.contents[i][j] == '') {

break;

}

match = true;

// first decode the length of the string

length = (int) (this.contents[i][j++] | (this.contents[i][j++] < < 16));

// if the length does not match, then don't bother checking the characters

if (inputlength != length) {

match = false;

} else {

// then start checking, character by character until we hit

// the null terminator to see if we have a match.

for (k = j; this.contents[i][k] != ''; k++) {

if (this.contents[i][k] != inputString[k-j]) {

// if we find we do not have a match, it'll happen

// earlier than finding we do, so jump right out of

// the loop.

match = false;

break;

}

}

k++;

}

// If we did find a match, return the id int.

if (match) {

return (int) (this.contents[i][k++] | (this.contents[i][k] < < 16));

}

// skip ahead to the next string in the array and do it again.

j += length + 3;

}

return -1;

}

The most interesting part of the Cache Conscious HashTable, however, is that it’s a small part of a larger data structure, a HAT-Trie. More on this in a later post.

Tags: Cache Concious, DeviceAtlas, dotMobi, HashMap, performance

A minor typo: its “Conscious” not “Concious” 🙂

Minor but important! Fixed and thanks mk.

Hello Mr Dennehy,

while I was searching for, additional informations, to the research paper written for the SPIRE 2005 conference by Nikolas Askitis and Justin Zobel, I found Your blog.

I am a student in this http://www.uni-weimar.de/cms/medien/webis/home.html research group,We are currently evaluating the opportunities, to hold large dictionary’s in the main memory, for our retrieval applications.

I would like to ask You, if You would allow us to test your implementation, against our’s, and do some further testing with Your code.

Please let me know, If You would agree and If You would like to get back with me to maybe discuss a little bit.

Thanks a lot, best regards

Hagen Tönnies

[…] HAT-Tries in Java Filed under: General, Java — Mark Dennehy @ 0:06 As I said in an earlier post detailing the CCHashTable, it formed a part of a larger data structure, the HAT-Trie. The HAT-Trie is a recent variant on the […]

I think performance gains are mainly coming from the encoding of integers in the contents instead of creating new Entry objects for each integer value. I would like to see the comparison of HashSet and this implementation (without integer values) under Java 6, my bet is they would be pretty close.

Ok, I also tried to implement a simple String set using same tricks, I don’t know but Java 6 standart HashSet implementation consistently gave better results. The problem is, Set uses String references and comparisons are basicly free because hash values of strigs are cached. So this is basically not very useful if you have the strings stored in memory as well, but could be an option to have a compact storage for character arrays. I also think your benchmark application could be flawed as well,

Hi! I was surfing and found your blog post… nice! I love your blog. 🙂 Cheers! Sandra. R.

The double-encoded HTML in the code samples is an unreadable mess. Please fix.

It was an artifact of the move from wordpress.com to wordpress.org. Should be fixed now.

It should be possible to make CCHashTable even more cache conscious by using byte arrays for storing strings instead of char arrays, this way it’s likely to take half the space when UTF-8 encoded. The idea is simple — the less data you fetch, the more cache hits you get.

And you don’t seem to cache key hash code in the slot bytes along with string length. This would improve string comparison speed and probably increase overall hash table performance.

In the function addString():

…

for (k = 0; k > 16) & 0xffff);

It looks like your null-terminating every string inserted into the

CChash, but the strings are length-encoded, so I don’t think you

have to do this. From what I gather, only the 2D-char array needs to

be nulled. You should try removing the nulls here, it will save

space and may increase performance too. To make this work, the two

bytes used to encode the “id” can be stored after the

length-encode: [length-encode][id][string]